Fun Fact:

The first data center built specifically for AI training — not general computing — was commissioned in 2013. It was considered absurdly oversized at the time. Within four years, it was considered embarrassingly small.

AI hardware is no longer a supporting actor in the tech narrative — it’s the constraint that everything else runs into eventually.

For the past two years, the AI conversation has revolved almost entirely around models. New architectures appear frequently. Benchmarks climb. Costs per token fall. Each release promises efficiency gains that seem to cancel out the limitations of the previous generation. That narrative still dominates headlines, conference stages, and investor decks. It’s familiar, comfortable, and easy to sell.

But beneath it, something quieter — and far more limiting — has been taking shape.

The next phase of AI will not be decided by who trains the smartest model. It will be decided by who can actually run AI at scale, day after day, without stressing the systems that make it possible. That question has very little to do with prompts or interfaces. It lives deeper, inside hardware limits, infrastructure tradeoffs, and energy constraints that no amount of clever software can simply wish away.

The Illusion of Endless Model Progress

On paper, AI progress still looks exponential. But in practice, it has started to feel heavier — almost slower, despite the headlines.

Training a new model is no longer the hardest part. The real challenge begins after the model works, when it has to be deployed, stabilized, monitored, and kept alive under real-world load. At scale, infrastructure complexity now rivals the neural network itself. Sometimes it even overshadows it.

Large AI systems depend on physical realities that software abstractions cannot hide forever — dense GPU clusters packed into limited space, memory bandwidth struggling to keep pace with compute, cooling systems operating close to thermal limits, energy supply negotiated years in advance, and physical land, permits, and zoning approvals that no algorithm can accelerate.

These are not software problems waiting for optimization. They are physics problems — and physics does not scale at the speed of hype, no matter how confident the roadmap sounds.

When Compute Isn’t the Problem, Memory Is

One of the least discussed constraints in modern AI is not raw compute power — it’s memory movement.

Processing units have grown dramatically more powerful. Memory bandwidth has not kept pace. As models grow larger, the cost of moving data between memory and processor becomes a bottleneck that raw FLOPs cannot overcome. A chip can be extraordinarily fast and still spend most of its time waiting.
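One rough way to see why a fast chip can still sit idle is the roofline model, which caps attainable throughput at the smaller of peak compute and memory bandwidth multiplied by arithmetic intensity (FLOPs performed per byte moved). The sketch below is only an illustration: the function names and the hardware figures (`PEAK_FLOPS`, `MEM_BANDWIDTH`) are assumptions made up for this example, not measurements of any real chip.

```python
def attainable_flops(peak_flops: float, mem_bandwidth: float, intensity: float) -> float:
    """Roofline bound in FLOP/s.

    peak_flops    -- peak compute throughput (FLOP/s)
    mem_bandwidth -- memory bandwidth (bytes/s)
    intensity     -- arithmetic intensity of the workload (FLOPs per byte moved)
    """
    return min(peak_flops, mem_bandwidth * intensity)


def compute_utilization(peak_flops: float, mem_bandwidth: float, intensity: float) -> float:
    """Fraction of peak compute the workload can use under the roofline bound."""
    return attainable_flops(peak_flops, mem_bandwidth, intensity) / peak_flops


# Hypothetical accelerator; numbers chosen only for illustration.
PEAK_FLOPS = 1.0e15     # 1 PFLOP/s
MEM_BANDWIDTH = 2.0e12  # 2 TB/s

if __name__ == "__main__":
    for intensity in (2.0, 50.0, 500.0, 2000.0):
        share = compute_utilization(PEAK_FLOPS, MEM_BANDWIDTH, intensity)
        print(f"{intensity:7.1f} FLOPs/byte -> {share:.1%} of peak compute")
```

With these assumed numbers, a workload needs about 500 FLOPs per byte before compute, rather than memory, becomes the limit. At 2 FLOPs per byte the same chip is bounded at 0.4% of its peak.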

This is why high-bandwidth memory has become one of the most contested components in the semiconductor supply chain. Future gains may come less from clever algorithms and more from physical design choices — stacking memory vertically, shortening data paths, reducing latency by margins that used to feel irrelevant.

At scale, those margins suddenly matter more than the benchmark on the box.

GPUs Were Inevitable — But They’re No Longer Enough

GPUs earned their central role in the AI boom. Their parallel architecture fit early deep-learning workloads almost perfectly, and their flexibility made them the obvious choice for a field that was still figuring out what it needed.

That dominance came with tradeoffs nobody wanted to talk about while the momentum was good.

As AI moved from research to production, companies ran into an uncomfortable reality: relying exclusively on general-purpose GPUs at scale is expensive, inefficient, and strategically limiting. If you don’t control the silicon, you don’t fully control your costs, your margins, or your roadmap. Over time, that lack of control compounds in ways that become very hard to unwind.

This has triggered a quiet but decisive shift toward application-specific chips — not because it sounds futuristic, but because the math leaves little choice. The AI hardware conversation has shifted from “what can we build” to “what can we actually sustain” — and that shift changes everything about how the next cycle will be won.

If you’re looking at how the industry is positioning itself in 2026, the deep dive The AI Energy Crisis: Why 2026 Is the Year of the “Electric Wall” provides useful context for why the next wave of AI will be constrained less by benchmarks and more by physical infrastructure:

https://techfusiondaily.com/ai-energy-crisis-electric-wall-2026/

The Fragility of the Silicon Supply Chain

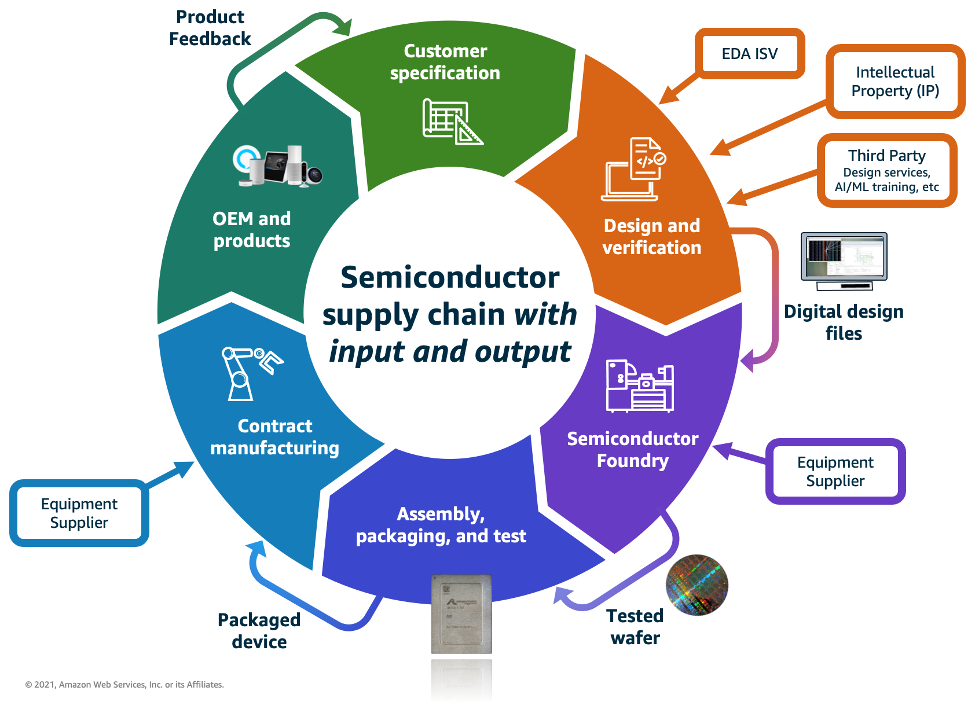

There is another reality that rarely makes it into optimistic AI narratives: the advanced chip supply chain is astonishingly concentrated.

A small number of companies control the most critical steps, from lithography equipment to leading-edge fabrication and advanced packaging. If one advanced fabrication facility goes offline, timelines slip everywhere. If one link breaks, entire product strategies stall, not for days but for quarters.

This fragility explains why governments are treating semiconductor manufacturing as a matter of national security rather than industrial policy. It also explains why “digital sovereignty” is no longer abstract — it’s operational. Countries that can’t fabricate advanced chips are discovering they can’t fully control their AI roadmaps either.

AI ambition now depends on geopolitical stability in ways software teams rarely had to factor into planning before. A model can be trained anywhere. The hardware it runs on cannot be sourced from just anywhere — and that asymmetry is only becoming more pronounced.

Energy: The Constraint That Refuses to Stay Invisible

Every large AI system eventually runs into the same question — usually later than it should: where does the electricity come from?

Per request, AI inference typically consumes far more energy than conventional workloads such as serving a web page or answering a database query. As AI hardware scales to support always-on, real-time, globally accessible products, power demand stops being a rounding error and starts becoming a design constraint that shapes every other decision.

Some companies are exploring nuclear partnerships. Others are building data centers next to renewable clusters. In some regions, permits are denied unless sustainability plans are proven in advance. None of this is distant planning — it’s happening now, in active negotiations, on timelines that the software roadmap didn’t account for.

AI is not just a compute problem. It is an energy problem — and energy moves slowly, regardless of how fast software iterates.

Edge AI: Shifting Intelligence Closer to the User

One response to mounting cloud pressure is pushing computation closer to users — and it’s more practical than it sounds.

Smartphones now ship with dedicated neural processing units. Laptops include on-device accelerators. Consumer hardware is quietly absorbing workloads that once required remote servers, reducing latency and improving privacy in the process.

But moving inference onto the device introduces new constraints: efficiency, heat, and battery life. Every watt suddenly matters at a scale that data center design never had to care about, because the budget is a battery and a pocket-sized enclosure. The winner of the next cycle may not be the most powerful chip; it may be the one that delivers useful intelligence without turning devices into pocket heaters.

Heat Is Becoming the Real Enemy

As chips grow denser, heat becomes the limiting factor that no benchmark mentions.

Traditional air cooling is nearing its practical limits. Data centers are moving toward liquid cooling, immersion systems, and complex thermal management strategies that would have sounded extreme just a decade ago. At this point, designing an AI hardware facility looks less like software engineering and more like industrial thermodynamics.
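The thermodynamics can be made concrete with the basic heat-transport relation Q = ṁ · c_p · ΔT, which gives the coolant flow needed to carry away a given heat load. The sketch below is a simplification under stated assumptions: it treats all electrical power as heat removed by a single water loop, and the 100 kW rack figure is hypothetical.

```python
WATER_SPECIFIC_HEAT = 4186.0  # J/(kg*K), approximate value for liquid water


def coolant_mass_flow(heat_watts: float, delta_t_kelvin: float,
                      specific_heat: float = WATER_SPECIFIC_HEAT) -> float:
    """Mass flow (kg/s) needed to carry away heat_watts with a coolant
    temperature rise of delta_t_kelvin, from Q = m_dot * c_p * delta_T."""
    if delta_t_kelvin <= 0:
        raise ValueError("delta_t_kelvin must be positive")
    return heat_watts / (specific_heat * delta_t_kelvin)


if __name__ == "__main__":
    rack_power_w = 100_000.0  # hypothetical 100 kW rack, for illustration only
    flow = coolant_mass_flow(rack_power_w, delta_t_kelvin=10.0)
    print(f"{flow:.2f} kg/s of water for a 10 K temperature rise")
```

The relation is linear in both directions: doubling the heat load, or halving the allowed temperature rise, doubles the flow that pumps and piping have to deliver.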

You can scale compute. You cannot negotiate with heat.

Scaling Has Physical Boundaries

Even if compute, memory, and energy all improve, AI still faces limits that don’t bend for roadmaps.

Latency cannot beat the speed of light. Heat must be dissipated. Geography determines where data centers can exist. These constraints don’t stop progress — but they shape it in ways that optimistic projections rarely acknowledge upfront. They decide which ideas survive contact with reality and which ones quietly disappear between the announcement and the launch.
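The speed-of-light limit is easy to put a number on. The sketch below computes a lower bound on round-trip time over a straight path; the refractive index of 1.47 for optical fiber is an assumed typical value, and real networks add routing detours, switching, and processing time on top of this floor.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact, by definition of the metre
FIBER_REFRACTIVE_INDEX = 1.47           # assumed typical value for silica fiber


def min_round_trip_ms(distance_km: float, refractive_index: float = 1.0) -> float:
    """Lower bound on round-trip time in milliseconds over a straight path.

    refractive_index=1.0 models vacuum; larger values slow propagation.
    Routing detours, switching, and processing time are all ignored.
    """
    speed = SPEED_OF_LIGHT_M_PER_S / refractive_index
    one_way_seconds = distance_km * 1000.0 / speed
    return 2.0 * one_way_seconds * 1000.0


if __name__ == "__main__":
    distance_km = 6000.0  # hypothetical user-to-data-center distance
    print(f"vacuum: {min_round_trip_ms(distance_km):.1f} ms")
    print(f"fiber:  {min_round_trip_ms(distance_km, FIBER_REFRACTIVE_INDEX):.1f} ms")
```

At 6,000 km, the floor is about 40 ms in vacuum and roughly 59 ms in fiber under these assumptions, before a single token is generated. No hardware upgrade lowers that number; only moving the compute closer does.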

AI Is Turning Into a Utility

At scale, AI begins to resemble a utility more than a product.

Every inference has a cost. Every user adds load. Every new feature increases energy demand on infrastructure that was already stretched. The companies that survive this transition will be the ones that think in terms of infrastructure economics — not just model performance or benchmark leadership.
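A back-of-the-envelope version of that infrastructure economics might look like the sketch below. Every input is a placeholder assumption rather than a measured figure: the energy per inference, the electricity price, and the PUE (power usage effectiveness, the ratio of total facility energy to IT energy) are illustrative values, and the model ignores hardware depreciation, networking, and staffing.

```python
def energy_cost_per_inference(energy_wh: float, price_per_kwh: float,
                              pue: float = 1.0) -> float:
    """Electricity cost of one inference, in the currency of price_per_kwh.

    energy_wh     -- IT energy used by one inference (watt-hours)
    price_per_kwh -- electricity price per kilowatt-hour
    pue           -- power usage effectiveness: facility energy / IT energy
    """
    if pue < 1.0:
        raise ValueError("pue cannot be below 1.0")
    return (energy_wh / 1000.0) * pue * price_per_kwh


def daily_energy_cost(requests_per_day: int, energy_wh: float,
                      price_per_kwh: float, pue: float = 1.0) -> float:
    """Electricity cost per day for a given request volume."""
    return requests_per_day * energy_cost_per_inference(energy_wh, price_per_kwh, pue)


if __name__ == "__main__":
    # All values below are hypothetical and chosen only to show the arithmetic.
    cost = daily_energy_cost(
        requests_per_day=100_000_000,
        energy_wh=0.5,
        price_per_kwh=0.10,
        pue=1.2,
    )
    print(f"daily electricity cost: {cost:,.0f}")
```

The point of the exercise is the shape of the formula, not the specific total: cost scales linearly with every user and every feature that adds requests, which is what makes the utility comparison apt.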

AI is no longer just software. It is a physical economy with physical constraints, and those constraints compound over time in ways that quarterly earnings calls don’t capture.

The Question That Actually Matters

Models will continue to improve. Benchmarks will rise. Interfaces will get smoother.

But the next tech cycle will not belong to whoever builds the smartest AI. It will belong to whoever can keep it running — reliably, efficiently, and at a cost structure that doesn’t collapse under its own weight.

The real question is no longer about breakthroughs. It’s about endurance.

Who will have the power — literally — to sustain the AI future everyone is racing toward?

Sources

TechFusionDaily — Original editorial analysis

Originally published at TechFusionDaily by Nelson Contreras https://techfusiondaily.com

Last updated: March 5, 2026
